Imagine going to sleep and “waking up dead”. Or riddle me this: what's worse, not taking your drugs at all or taking the wrong medication? These, and then some, are real-life scenarios of people falling victim to software failures, as detailed later in this article.

In Kenya, Haron Chepchieng Koimur's MBA research at the University of Nairobi, A Survey of Software Testing Processes Used by Software Developers in Kenya, revealed that “about two-thirds of the software developers in Kenya do actual software testing. For those customizing or performing agency roles of re-selling already developed software, they enlisted experts from the parent software companies to do the testing.”

In this article, we look at the software engineering testing process. We then discuss the criteria for completion of testing and, lastly, touch lightly on verification and validation. We seek answers to a variety of questions, chief among them:

What is Software Engineering Testing?

Can we safely say the software has been completely tested or should we just build software that is “good enough”?

What can the real world teach us about why we need thorough software testing?

Software Engineering Testing Process

More often than not, testing abides by the following principles:

All the tests should meet the customer's requirements.

Testing should start from small parts and extend to the larger parts.

Where possible, testing should be conducted by a third party to eliminate bias.

Exhaustive testing is not achievable, hence an optimal degree of testing should be arrived at based on the program’s risk assessment.

Types of Testing Techniques

Static testing

Static testing involves finding defects in the program under test without executing any code. It can be manual or automated. Project documents examined under static testing include requirement specifications, test cases, test scripts, source code and design documents. Static testing is done to catch errors at an early stage of the Software Development Life Cycle (SDLC).

Static testing is subdivided into two categories, i.e., Reviews and Static Analysis. Reviews range from purely informal peer reviews to formal inspections, whereas Static Analysis is an examination of requirements, code or design with the goal of identifying flaws that may or may not cause failures. Compilers act as static analysis tools since they point out syntax errors without running the code.

For static analysis, the code structure can be separated into three aspects, namely control flow, data flow, and data structure.

Data flow: Following the data trail of a program, looking at how data gets accessed and modified as per the program instructions. Data flow analysis can help identify flaws such as unused variable definitions.

Control flow: Following the order in which program instructions get executed. It involves looking into loops and conditions to identify defects such as dead code, i.e., code that can never be reached.

Data structure: Focuses on how the data is organized irrespective of the code, which provides a roadmap for testing the data and control flows. Data structure complexity ultimately adds to code complexity.
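
To make the data flow idea a little more concrete, here is a minimal sketch that uses Python's standard `ast` module to flag variables that are assigned but never read, all without executing the code under inspection. The helper name `unused_assignments` and the sample snippet are made up for illustration, and the check treats the whole snippet as a single scope, which real linters do not.

```python
import ast


def unused_assignments(source: str) -> list[str]:
    """Return names that are assigned in `source` but never read.

    Simplified on purpose: the whole snippet is treated as one scope.
    """
    tree = ast.parse(source)  # parsing only; the snippet is never executed
    assigned, read = set(), set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Store):
                assigned.add(node.id)
            elif isinstance(node.ctx, ast.Load):
                read.add(node.id)
    return sorted(assigned - read)


# Hypothetical code under review: `tax` is defined but never used.
SAMPLE = """
price = 100
discount = 0.2
tax = 0.16
total = price * (1 - discount)
print(total)
"""

print(unused_assignments(SAMPLE))  # ['tax']
```

Because `ast.parse` raises a `SyntaxError` on malformed input, the same call also behaves like the compiler check mentioned earlier: it reports syntax errors without running anything.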

Dynamic Testing

Dynamic testing involves executing the code base and checking the functional behavior of the software system, its memory/CPU usage and its overall performance. It uses dynamic inputs for both positive testing (inputs allowed as per the requirements) and negative testing (inputs that are not allowed).
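
As a small sketch of positive versus negative testing, consider a hypothetical `withdraw` function whose rules (amount must be positive and must not exceed the balance) are assumptions made purely for this example.

```python
def withdraw(balance: int, amount: int) -> int:
    """Return the new balance after a withdrawal (illustrative rules only)."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > balance:
        raise ValueError("insufficient funds")
    return balance - amount


def rejects(balance: int, amount: int) -> bool:
    """Return True if withdraw() refuses the input by raising ValueError."""
    try:
        withdraw(balance, amount)
    except ValueError:
        return True
    return False


# Positive test: an input the requirement allows.
assert withdraw(1000, 300) == 700

# Negative tests: inputs the requirement forbids must be rejected.
assert rejects(1000, -50)
assert rejects(1000, 5000)
```

The positive test confirms the happy path works, while the negative tests confirm the function fails safely when given input it should never accept.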

Dynamic testing techniques are divided into three categories, namely:

Structure-based Testing

Also called white box techniques, these focus on how the code is structured and how it works internally. This brings about the concept of code coverage, which is normally measured during unit and integration testing and establishes what percentage of the total code written is exercised by the structural tests. Code coverage, however, cannot reveal missed requirements, i.e., omitted code. Code coverage can be measured in three ways:

Statement coverage = Number of Statements Executed / Total Number of Statements

Decision coverage = Number of Decision Outcomes Executed / Total Number of Decision Outcomes

Condition/Multiple condition coverage: condition coverage checks that each outcome of every logical condition in a program has been executed, while multiple condition coverage requires every combination of condition outcomes to be executed.
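
The decision coverage ratio can be sketched by hand-instrumenting a function. In the example below, the `decision` helper, the `shipping_fee` function and its thresholds are all hypothetical; in practice a coverage tool does this bookkeeping for you.

```python
# Every (decision label, outcome) pair observed so far.
executed_outcomes = set()


def decision(label: str, outcome: bool) -> bool:
    """Record which outcome a decision took, then pass the value through."""
    executed_outcomes.add((label, bool(outcome)))
    return outcome


def shipping_fee(total: float, is_member: bool) -> int:
    """Hypothetical function under test with two decisions."""
    if decision("free_shipping", total >= 50):
        return 0
    if decision("member_rate", is_member):
        return 3
    return 5


def decision_coverage(labels: list[str]) -> float:
    """Decision outcomes executed / total decision outcomes."""
    possible = {(label, value) for label in labels for value in (True, False)}
    return len(executed_outcomes & possible) / len(possible)


LABELS = ["free_shipping", "member_rate"]

shipping_fee(60, False)
print(decision_coverage(LABELS))  # 1 of 4 outcomes -> 0.25
shipping_fee(10, True)
print(decision_coverage(LABELS))  # 3 of 4 outcomes -> 0.75
shipping_fee(10, False)
print(decision_coverage(LABELS))  # 4 of 4 outcomes -> 1.0
```

Note that the first call alone already executes a return statement, yet it covers only a quarter of the decision outcomes, which is why decision coverage is generally the stronger of the two measures.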

Experience-Based Techniques

As the name suggests, these involve carrying out testing activities based on the tester's past experience in that domain. Error guessing and exploratory testing fall in this category.

Specification-based Techniques

These derive tests from the business’ functional and non-functional specifications and are of the following types.

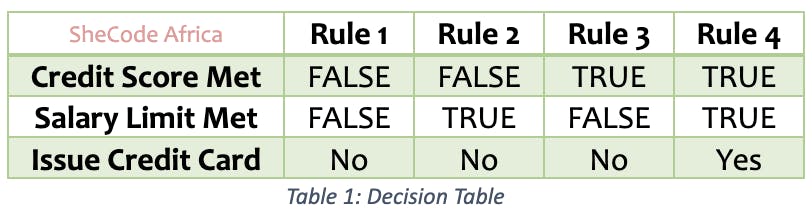

*Decision Tables*

Also called cause-effect tables, decision tables are a good way of testing combinations of inputs. One can lay out the conditions applicable to the application segment under test as a table and identify the expected outcome for each combination, which yields an effective set of tests. The decision table below tests the conditions for issuing a credit card, which happens only when both the credit score and the salary limit are met.

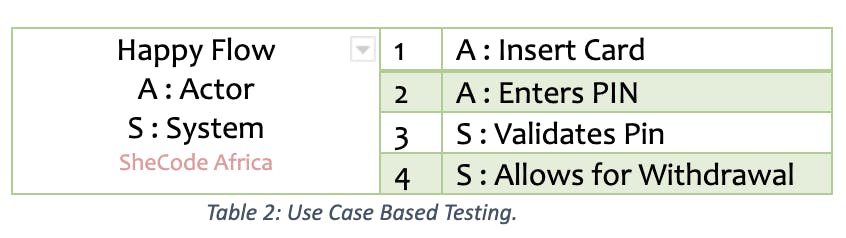
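
A decision table like this translates almost directly into a data-driven test. The sketch below assumes made-up thresholds (`MIN_CREDIT_SCORE`, `MIN_SALARY`) and a hypothetical `issue_card` function; only the rule that both conditions must hold comes from the table.

```python
# Hypothetical thresholds, chosen only for illustration.
MIN_CREDIT_SCORE = 700
MIN_SALARY = 50_000


def issue_card(credit_score: int, salary: int) -> bool:
    """Issue a card only when both conditions are met."""
    return credit_score >= MIN_CREDIT_SCORE and salary >= MIN_SALARY


# Each row: (credit score met?, salary limit met?, expected decision)
DECISION_TABLE = [
    (True, True, True),
    (True, False, False),
    (False, True, False),
    (False, False, False),
]


def run_decision_table() -> list[bool]:
    """Run one test per table row; True means the row behaved as expected."""
    results = []
    for score_ok, salary_ok, expected in DECISION_TABLE:
        score = MIN_CREDIT_SCORE if score_ok else MIN_CREDIT_SCORE - 1
        salary = MIN_SALARY if salary_ok else MIN_SALARY - 1
        results.append(issue_card(score, salary) == expected)
    return results


print(all(run_decision_table()))  # True
```

With two conditions there are only four rows, so every combination can be tested; with more conditions the table grows quickly and rows are usually merged or prioritized.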

*Use case-based Testing*

A use case is a detailed sequence of steps describing the interaction between an actor and the system, transaction by transaction. This technique is most effective in identifying integration defects since it helps to identify test cases that exercise the system as a whole, like an actual user would. In addition, a use case defines the process’ preconditions and postconditions. The ATM example above can be tested via a use case as shown:

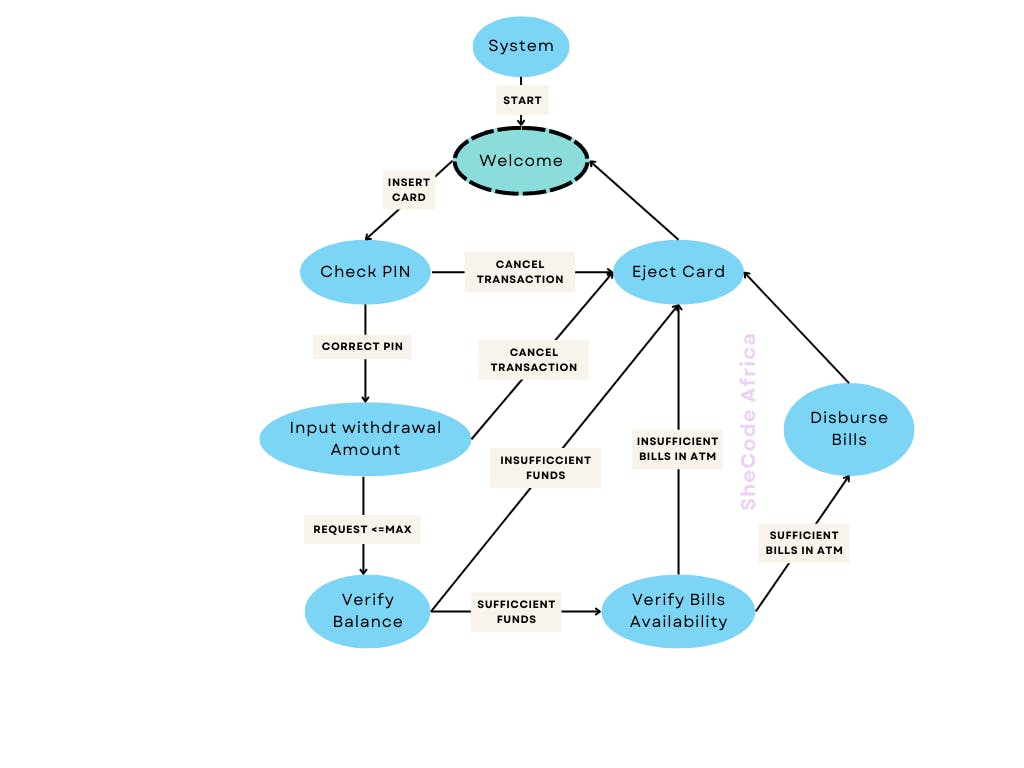
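
In code, a use case test walks through the main flow end to end and checks the preconditions and postconditions around it. The toy `Atm` class below, its PIN and its balance are assumptions for illustration and are not taken from the figure.

```python
class Atm:
    """Toy ATM with a single account; all names and rules are illustrative."""

    def __init__(self, pin: str, balance: int) -> None:
        self._pin = pin
        self.balance = balance
        self.authenticated = False

    def enter_pin(self, pin: str) -> bool:
        self.authenticated = pin == self._pin
        return self.authenticated

    def withdraw(self, amount: int) -> int:
        if not self.authenticated:
            raise PermissionError("PIN not verified")
        if amount > self.balance:
            raise ValueError("insufficient funds")
        self.balance -= amount
        return amount

    def eject_card(self) -> None:
        self.authenticated = False


def test_withdraw_cash_use_case() -> None:
    atm = Atm(pin="1234", balance=500)

    # Precondition: the account holds enough money for the withdrawal.
    assert atm.balance >= 200

    # Main flow, step by step, as the actor (customer) would perform it.
    assert atm.enter_pin("1234")
    assert atm.withdraw(200) == 200
    atm.eject_card()

    # Postconditions: balance reduced and the session closed.
    assert atm.balance == 300
    assert not atm.authenticated


test_withdraw_cash_use_case()
```

Alternate flows of the same use case, such as a wrong PIN or insufficient funds, would each get a test of their own.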

*State Transition Testing*

Used where the application being tested, or a part of it, can be treated as a finite state machine. From the example above, the ATM flow has finite states and hence qualifies to be tested using state transition testing. There are four basic things to consider when performing state transition testing:

States a system can achieve

Events that cause the change of state

The transitions from one state to another

Outcomes of change of state
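
The four items above map neatly onto a transition table. The sketch below models a simplified ATM; the state and event names are hypothetical, chosen only to show the shape of such a test.

```python
# (current state, event) -> next state
TRANSITIONS = {
    ("idle", "insert_card"): "awaiting_pin",
    ("awaiting_pin", "valid_pin"): "menu",
    ("awaiting_pin", "invalid_pin"): "awaiting_pin",
    ("awaiting_pin", "cancel"): "idle",
    ("menu", "withdraw"): "dispensing",
    ("menu", "cancel"): "idle",
    ("dispensing", "cash_taken"): "idle",
}


def run(events: list[str], state: str = "idle") -> str:
    """Feed events to the state machine and return the final state."""
    for event in events:
        key = (state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} not allowed in state {state!r}")
        state = TRANSITIONS[key]
    return state


# Valid paths: each event moves the machine to the expected state.
assert run(["insert_card", "valid_pin", "withdraw", "cash_taken"]) == "idle"
assert run(["insert_card", "invalid_pin"]) == "awaiting_pin"

# Invalid transition: withdrawing before inserting a card must be refused.
try:
    run(["withdraw"])
except ValueError as error:
    print(error)
```

A thorough state transition test suite would aim to exercise every entry in the table at least once, plus a selection of events that are not allowed in each state.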

V-Model Testing

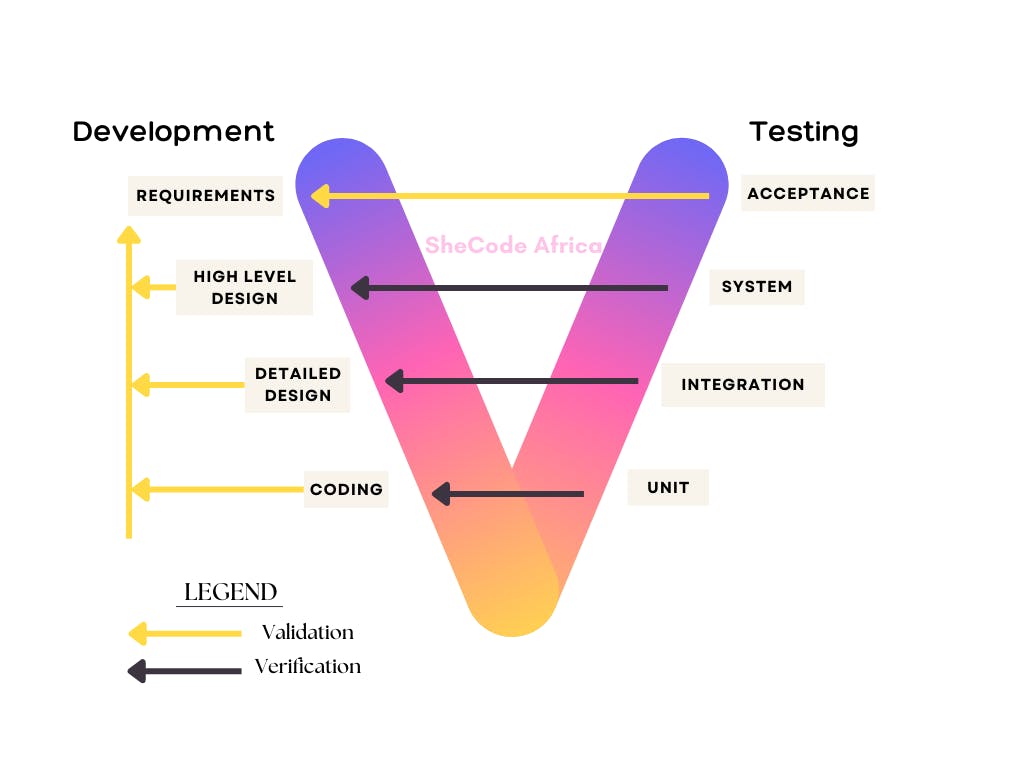

Also called the Verification and Validation model. This combination makes it difficult to classify the V-model under the generic types of testing techniques, since validation falls under dynamic testing whereas verification is a form of static testing.

Verification: “Are we building the product right?” This is a form of static testing made up of the set of tasks that ensure the software correctly implements its specified functions.

Validation: “Are we building the right product?” This is a form of dynamic testing that seeks to find out whether the product meets its high-level requirements.

In the V-model, every phase in the development life cycle has a corresponding testing phase, with only the coding phase joining the two sides of the V.

The left side of the model is the Software Development Life Cycle (SDLC), while the right side is the Software Test Life Cycle (STLC).

Criteria for Completion of Testing

So when are we done with testing? Or rather, how do we know we have done enough testing? Two statements can be used to answer this question:

“You're never done testing; the burden simply shifts from you (the software engineer) to the end user.”

“You’re done testing when you run out of time or you run out of money.” Admittedly, this statement is a bit cynical but accurate nonetheless, as will be highlighted later on in this article.

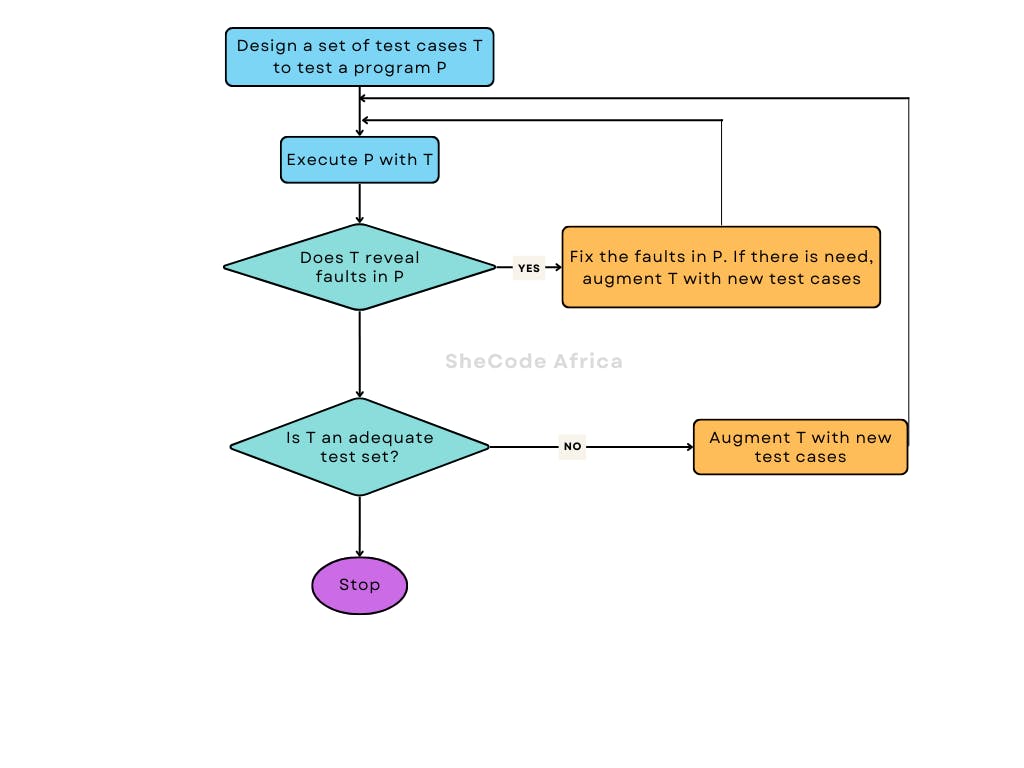

The figure above is a flowchart to aid in understanding how test adequacy is applied and evaluated.

That said, complete testing, i.e., the discovery of all faults, desirable as it may be, is a near-impossible task for a majority of systems since:

A program’s input domain may be too large to be completely used in testing a system. This includes both valid and invalid inputs. The program may also have a large number of states. Moreover, some inputs may have timing constraints, making them valid at certain times and invalid at other times.

The complexity of a program’s design may make it almost impossible to test exhaustively. This may arise from implicit assumptions and decisions, e.g., the use of global or static variables to control program execution.

The chances of recreating all possible execution environments of the system, known and unknown, stated and implied, are almost zero. This is especially evident when system behavior depends on real-world variables, e.g., temperature, altitude and weather.
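
A quick back-of-the-envelope calculation shows how fast the input domain outgrows any test budget. The figures below are assumptions picked for illustration: a function taking two 32-bit integers (64 bits of input in total) and a test rig that manages one billion tests per second.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def years_to_test_exhaustively(input_bits: int, tests_per_second: float) -> float:
    """Years needed to try every possible input once."""
    total_inputs = 2 ** input_bits
    return total_inputs / tests_per_second / SECONDS_PER_YEAR


# Two 32-bit integer inputs at one billion tests per second (assumed figures).
print(round(years_to_test_exhaustively(64, 1e9)))  # roughly 585 years
```

And that is for a single function with only two numeric inputs, before considering program states, timing or the execution environment.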

PS. Before we look at the software failure case studies, my colleague Ondiek Elijah has done splendid pieces touching on testing. I highly recommend you have a look at his piece on End-To-End Testing With Cypress, as well as the one he did on Integration Testing With Pytest.

Software Failures

The following cases show what happens when software fails. To be clear, this article by no means explicitly attributes these failures to inadequate software testing. That said, the fact that these software bugs came to light only after customers started using the products speaks volumes.

Mitsubishi’s 68,000 Vehicle Recall

In 2018, about 68,000 vehicles were recalled due to software bugs that affected braking. The bug affected the 2017-2018 Outlander Sport, the 2018 Outlander, and the 2018 Eclipse Crossover, and would cause the hydraulic unit to reset due to noise from the pump motor. This led to automatic braking being canceled, wheels momentarily locking, and electronic stability control momentarily cutting out.

St. Mary’s Mercy Hospital

They went to bed and woke up dead. At least that’s what happened to 8,500 people who received treatment between Oct 25 and Dec 11 at St. Mary’s Mercy Hospital and woke up to news of their own deaths in their mailboxes. The hospital’s recently upgraded patient monitoring system had a mapping error in its software that made it assign a Code 20 (Expired) instead of a Code 01 (Discharged), and it subsequently sent emails to the patients and their insurance providers.

National Health Service

What’s worse, not taking your drugs at all or taking the wrong medication? In 2016, it emerged that a software fault in SystmOne, a clinical computer system, had since 2009 led to at least 300,000 heart patients either being given the wrong drugs or being misadvised, because it miscalculated patients’ risk of a heart attack. The result? Many patients suffered heart attacks or strokes after being told they were at low risk, while others suffered from the side effects of taking unnecessary medication.

Conclusion

Complete testing is hard to achieve. However, adequate testing still needs to be done, if the case studies mentioned here are anything to go by. After all, from a cost perspective, fixing bugs or integrating new features after launching an application is more expensive than detecting the issues early in the SDLC.

When all is said and done, data still shows software testing should be at the forefront of any SDLC, and yet we are not testing enough. Are we not testing enough just because we have run out of time or money or both? Let me know your thoughts in the comment section below.

That has been it from me. See you in the next article. Be Safe. Be Kind. Peace.